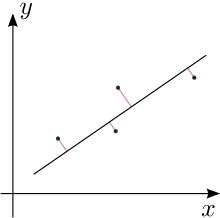

Linear regression is the process of using training data to identify a line or curve, called the hypothesis, that represents the relationship between the feature variables and the target variable. For example, when predicting the price of a house from its area, linear regression finds the relationship between area and price. The chosen line or curve should be the one with the minimum error.
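For the house-price example, a hypothesis with a single feature could be written as a straight line. The symbols here are illustrative assumptions rather than notation from this article: $x$ is the area, $h_\theta(x)$ is the predicted price, and $\theta_0$ and $\theta_1$ are the intercept and slope parameters.

$$
h_\theta(x) = \theta_0 + \theta_1 x
$$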

The error is measured by a loss function, which depends on the parameters of the hypothesis. The parameters are varied to find the smallest value of the loss function. In essence, the loss function measures the difference between the actual value of the target variable and the value computed by the hypothesis.
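As a rough illustration, one common choice of loss is the mean squared error. The sketch below assumes that choice along with a one-feature straight-line hypothesis; the function names and the tiny dataset are made up for the example.

```python
def hypothesis(theta0, theta1, x):
    """Predicted target for feature x under a straight-line hypothesis."""
    return theta0 + theta1 * x


def mse_loss(theta0, theta1, xs, ys):
    """Mean of the squared differences between predicted and actual targets."""
    n = len(xs)
    return sum((hypothesis(theta0, theta1, x) - y) ** 2 for x, y in zip(xs, ys)) / n


# Made-up data in which price is exactly twice the area.
areas = [1.0, 2.0, 3.0]
prices = [2.0, 4.0, 6.0]

loss_perfect = mse_loss(0.0, 2.0, areas, prices)  # parameters that match the data
loss_poor = mse_loss(0.0, 1.0, areas, prices)     # slope too small, so the loss is larger

print(loss_perfect, loss_poor)
```

Parameters that fit the data better give a smaller loss, which is why the loss can be used to compare candidate lines.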

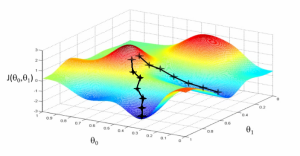

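Gradient descent is one method used in linear regression to find the minimum of the loss function. It is iterative: at each step the parameters are adjusted in the direction that reduces the loss, and the process repeats until the loss stops decreasing. In general, gradient descent may settle in a local minimum rather than the global one. For linear regression with a squared-error loss, however, the loss surface is convex, so any minimum it reaches is the global one.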
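The iterative update can be sketched as follows. This is a minimal batch gradient descent under assumed choices: a one-feature hypothesis `theta0 + theta1 * x`, a mean squared error loss, a fixed number of iterations, and made-up data. The function name and default values are illustrative only.

```python
def gradient_descent(xs, ys, learning_rate=0.05, iterations=2000):
    """Fit theta0 + theta1 * x by repeatedly stepping against the MSE gradient."""
    theta0, theta1 = 0.0, 0.0  # arbitrary starting point
    n = len(xs)
    for _ in range(iterations):
        # Prediction error for every training example at the current parameters.
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        # Partial derivatives of the mean squared error.
        grad0 = 2 * sum(errors) / n
        grad1 = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        # Move each parameter a small step in the direction that lowers the loss.
        theta0 -= learning_rate * grad0
        theta1 -= learning_rate * grad1
    return theta0, theta1


# Made-up data generated from y = 1 + 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

theta0, theta1 = gradient_descent(xs, ys)
print(theta0, theta1)  # should be close to 1 and 2
```

A fixed iteration count keeps the sketch short. In practice a stopping rule based on how little the loss changes between steps is a common alternative.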

The learning rate determines the size of each step taken toward the minimum. It must be set before training begins. Once the hypothesis is finalized, it is applied to any new data passed to the model to compute the predicted value.
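To see why the learning rate matters, the sketch below trains the same model with three different rates and then applies the final hypothesis to a new input. It assumes a mean squared error loss and a one-feature straight-line hypothesis. The data, the function names, and the specific rates are hypothetical values chosen for illustration.

```python
def mse(theta0, theta1, xs, ys):
    """Mean squared error of the line theta0 + theta1 * x on the data."""
    return sum((theta0 + theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(xs, ys, learning_rate, iterations):
    """Batch gradient descent on the MSE, starting from zero parameters."""
    theta0, theta1 = 0.0, 0.0
    n = len(xs)
    for _ in range(iterations):
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        grad0 = 2 * sum(errors) / n
        grad1 = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        theta0 -= learning_rate * grad0
        theta1 -= learning_rate * grad1
    return theta0, theta1


def predict(theta0, theta1, x):
    """Apply the finalized hypothesis to a new input."""
    return theta0 + theta1 * x


# Made-up data generated from y = 1 + 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

# Loss after the same small number of steps for three learning rates.
losses = {}
for lr in (0.001, 0.05, 0.2):
    t0, t1 = train(xs, ys, lr, 50)
    losses[lr] = mse(t0, t1, xs, ys)
print(losses)

# Train longer with the moderate rate, then predict for an unseen input.
theta0, theta1 = train(xs, ys, 0.05, 2000)
new_prediction = predict(theta0, theta1, 5.0)
print(new_prediction)  # should be close to 11
```

On this data the smallest rate makes slow progress, the moderate rate reaches a much lower loss in the same number of steps, and the largest rate overshoots so the loss grows instead of shrinking.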